Major AI Coding Assistants Now Writing 41% of Corporate Code, Raising Security Questions

A large share of your company’s new code is no longer written by a human. AI coding assistants have quietly crossed a threshold that’s forcing enterprises to confront an uncomfortable reality: tools adopted to speed developers up are now producing a substantial portion of the code themselves.

The 40% Tipping Point

Recent data shows AI coding assistants now contribute to 41% of code commits in enterprise environments where these tools are deployed. This isn’t just autocomplete anymore—these systems are generating entire functions, refactoring legacy code, and suggesting architectural patterns. What started as an experiment in developer productivity has become a fundamental shift in how software gets built.

The implications extend far beyond efficiency metrics. When roughly four in ten commits include AI-suggested code, questions about code ownership, security vulnerabilities, and technical debt take on new urgency. Engineering leaders are scrambling to update policies written for a world where humans wrote every line.

Security Concerns Move to the Forefront

Enterprise security teams are raising red flags about AI-generated code entering production systems without adequate scrutiny. The core issue: AI coding assistants are trained on public repositories, which means they can suggest code patterns that include known vulnerabilities or reproduce copyrighted code snippets.

Several Fortune 500 companies have recently tightened their policies around AI coding tool usage. Some now require additional code review steps specifically for AI-generated code blocks. Others have implemented automated scanning tools that flag sections likely produced by AI assistants for enhanced security analysis.

The challenge isn’t theoretical. Security researchers have documented cases where AI coding assistants suggested authentication bypasses, SQL injection vulnerabilities, and insecure cryptographic implementations—all patterns learned from flawed code in their training data. When developers accept these suggestions without careful review, those vulnerabilities ship to production.
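To make one of these vulnerability classes concrete, here is a minimal sketch of the SQL injection pattern next to its parameterized fix. It is an illustration only, not taken from any documented incident: the `users` table, the function names, and the payload are all hypothetical, and it uses Python's standard `sqlite3` module with an in-memory database.

```python
import sqlite3


def make_demo_db():
    """Build a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, username):
    # Vulnerable: user input is concatenated straight into the SQL text,
    # so a crafted value can change the structure of the query.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized: the driver passes the value separately from the SQL text,
    # so it is always treated as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"  # hypothetical attacker-supplied input
    print("unsafe:", find_user_unsafe(conn, payload))  # matches every row
    print("safe:  ", find_user_safe(conn, payload))    # matches no row
```

Both functions look nearly identical in a diff, which is part of why a suggestion like the first one can slip through a hurried review.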

The Code Review Bottleneck

The rise of AI-generated code is creating an unexpected problem: code review processes designed for human output are struggling to keep pace. Developers using AI assistants can produce three to five times more code in the same timeframe, but review capacity hasn’t scaled accordingly.

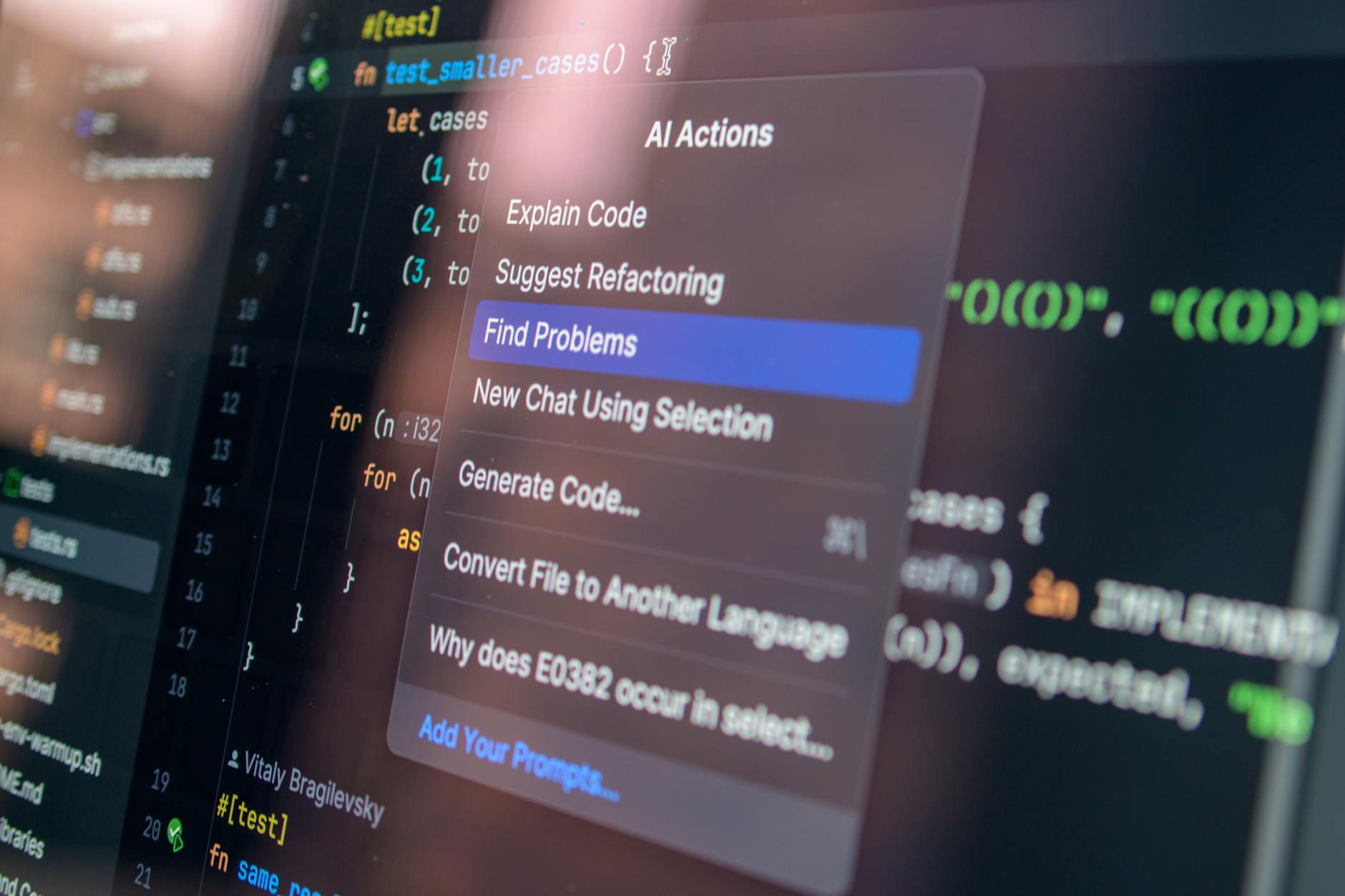

Engineering managers report that reviewers often can’t distinguish which portions of a pull request were AI-generated versus human-written. This opacity makes it harder to apply appropriate scrutiny. Some teams now require developers to annotate AI-generated sections, though compliance with these policies varies widely.
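One lightweight way a team might implement such annotation is a commit-message trailer that tooling can count. The sketch below assumes a hypothetical `AI-Assisted: yes|no` trailer; neither the trailer name nor the functions come from any specific company's policy, and real compliance checks would likely run in CI against actual repository history.

```python
import re

# Hypothetical trailer convention: a line reading "AI-Assisted: yes" or "AI-Assisted: no".
TRAILER = re.compile(r"^AI-Assisted:[ \t]*(yes|no)[ \t]*$", re.IGNORECASE | re.MULTILINE)


def ai_annotation(message):
    """Return True or False if the commit is annotated, or None if the trailer is missing."""
    match = TRAILER.search(message)
    if match is None:
        return None
    return match.group(1).lower() == "yes"


def summarize(messages):
    """Count commits by annotation status so gaps in compliance are visible."""
    counts = {"ai": 0, "human": 0, "unannotated": 0}
    for message in messages:
        flag = ai_annotation(message)
        if flag is None:
            counts["unannotated"] += 1
        elif flag:
            counts["ai"] += 1
        else:
            counts["human"] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "Add retry logic\n\nAI-Assisted: yes",
        "Fix typo in README\n\nAI-Assisted: no",
        "Refactor billing module",  # no trailer: policy not followed
    ]
    print(summarize(sample))
```

Tracking the unannotated count separately matters here, since a missing trailer says nothing about whether AI was involved.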

The quality of AI-generated code presents a paradox. While often syntactically correct and functionally adequate, it can lack the contextual awareness that comes from understanding the broader system architecture. Reviewers must work harder to spot subtle integration issues or architectural mismatches that a human developer might have avoided.

Policy Changes Across the Industry

Major technology companies are implementing new governance frameworks for AI coding assistant usage. These policies typically include requirements for disclosure when AI tools contribute significantly to a code submission, mandatory security scanning for AI-generated code, and restrictions on using these tools for security-critical components.
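The three requirements described above (disclosure, mandatory scanning, and restrictions on security-critical components) could be expressed as a simple pre-merge check. The following is a rough sketch under stated assumptions: the `Submission` fields, the `RESTRICTED_PATTERNS` paths, and the violation messages are all invented for illustration and do not reflect any particular company's framework.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical paths an organization might treat as security-critical.
RESTRICTED_PATTERNS = ("auth/*", "crypto/*")


@dataclass
class Submission:
    ai_assisted: bool            # did AI tools contribute significantly?
    ai_disclosed: bool           # did the author disclose that contribution?
    security_scan_passed: bool   # result of the mandatory security scan
    changed_paths: tuple         # repository-relative paths touched by the change


def policy_violations(sub):
    """Return a list of human-readable policy violations (empty if none)."""
    violations = []
    if not sub.ai_assisted:
        return violations  # these rules apply only to AI-assisted changes
    if not sub.ai_disclosed:
        violations.append("AI assistance not disclosed")
    if not sub.security_scan_passed:
        violations.append("security scan missing or failed")
    restricted = [
        path for path in sub.changed_paths
        if any(fnmatch(path, pattern) for pattern in RESTRICTED_PATTERNS)
    ]
    if restricted:
        violations.append("AI-assisted change touches restricted paths: " + ", ".join(restricted))
    return violations


if __name__ == "__main__":
    change = Submission(
        ai_assisted=True,
        ai_disclosed=False,
        security_scan_passed=True,
        changed_paths=("auth/login.py", "docs/readme.md"),
    )
    for violation in policy_violations(change):
        print(violation)
```

A check like this only works if the `ai_assisted` flag is reliable, which depends on the disclosure practices it is meant to enforce.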

Some organizations have banned AI coding assistants entirely for certain projects, particularly those involving sensitive customer data or regulated industries. Others are taking a middle path, allowing the tools but requiring additional training for developers on their limitations and security implications.

The legal landscape remains murky. Questions about code ownership, liability for AI-generated bugs, and intellectual property rights are largely unresolved. Legal departments are advising caution, but the technology has moved faster than the frameworks needed to govern it.

Impact on Developer Roles

The productivity gains from AI coding assistants are undeniable, but they’re reshaping what it means to be a software developer. Junior developers report that these tools help them contribute faster, but some engineering leaders worry about skill development when AI handles routine coding tasks.

The role is shifting toward code curation and architecture rather than pure implementation. Developers spend more time reviewing, refining, and integrating AI suggestions than writing from scratch. This evolution favors engineers with strong system design skills and the judgment to evaluate AI output critically.

Job security concerns are real but nuanced. While AI coding assistants dramatically boost individual productivity, demand for software continues to outpace supply. The question isn’t whether developers will have jobs, but what those jobs will look like as AI handles an increasing share of implementation work.

The Path Forward

As AI coding assistants become embedded in enterprise development workflows, organizations must mature their approach beyond simple adoption. This means investing in security tools that can analyze AI-generated code, training developers to use these assistants effectively while understanding their limitations, and updating code review processes to handle the increased volume and different risk profile of AI-assisted development.

The 41% threshold isn’t an endpoint—it’s a wake-up call. Companies that treat AI coding assistants as just another developer tool, without addressing the security, quality, and governance challenges they introduce, are building technical and legal debt that will eventually come due. Those that proactively establish guardrails while embracing the productivity benefits will be better positioned for a future where the line between human and AI-written code becomes increasingly blurred.

The question is no longer whether AI will write a significant portion of enterprise code. It already does. The question is whether organizations can implement the oversight, security practices, and cultural changes needed to manage this new reality responsibly.