Anthropic Raises $4.5B Series D at $40B Valuation, Plans 50K H100 GPU Cluster

The AI infrastructure arms race just entered a new phase. Anthropic’s $4.5 billion Series D—raising its valuation to $40 billion—isn’t just another funding milestone. It’s a declaration that the cost of competing in frontier AI has reached levels that will fundamentally reshape who can play this game and what enterprises will pay for access.

The Real Story Behind the Numbers

Anthropic’s funding at this scale signals a market inflection point. The company’s plan to deploy a 50,000 H100 GPU cluster represents roughly $1.5 billion in hardware alone, based on current market pricing for NVIDIA’s flagship AI chips. This doesn’t account for power infrastructure, cooling systems, networking equipment, or the specialized facilities required to house such computational density.
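The $1.5 billion hardware estimate is simple multiplication. A minimal sketch, assuming an illustrative range of $25,000 to $40,000 per GPU (our assumption, not a quoted price), shows the per-unit price the article's figure implies and how sensitive the total is to it:

```python
GPU_COUNT = 50_000

def hardware_cost(gpu_count: int, unit_price_usd: float) -> float:
    """GPU purchase cost only: excludes power, cooling, networking, and facilities."""
    return gpu_count * unit_price_usd

# The article's $1.5B figure divided across 50,000 GPUs.
implied_unit_price = 1.5e9 / GPU_COUNT

# Hypothetical low/implied/high unit prices; real H100 pricing varies by
# vendor, form factor, and order volume.
for price in (25_000, implied_unit_price, 40_000):
    total = hardware_cost(GPU_COUNT, price)
    print(f"${price:,.0f}/GPU -> ${total / 1e9:.2f}B")
```

Every $5,000 swing in unit price moves the total by $250 million, before any facility costs are counted.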

For context, training runs for frontier models now routinely cost $100 million or more. The capital intensity of AI development has crossed a threshold where only companies with multi-billion-dollar war chests, or hyperscaler partnerships, can remain competitive. This investment pattern mirrors historical infrastructure buildouts in telecommunications and cloud computing, where high initial capital requirements drove natural consolidation.
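One way to see how a single run reaches nine figures is to price it in GPU-hours. The sketch below is illustrative only: the cluster sizes, run lengths, and effective hourly rates are hypothetical assumptions, not figures from Anthropic or this article.

```python
def training_run_cost(gpu_count: int, days: float, usd_per_gpu_hour: float) -> float:
    """Compute-only cost of a run billed by GPU-hour (ignores failed runs, data, and staff)."""
    gpu_hours = gpu_count * days * 24
    return gpu_hours * usd_per_gpu_hour

# Hypothetical scenarios: (GPUs, run length in days, effective $ per GPU-hour).
scenarios = [
    (10_000, 60, 2.00),
    (25_000, 90, 2.00),
    (50_000, 90, 2.50),
]

for gpus, days, rate in scenarios:
    cost = training_run_cost(gpus, days, rate)
    print(f"{gpus:,} GPUs x {days} days @ ${rate:.2f}/GPU-hr -> ${cost / 1e6:,.0f}M")
```

Under these assumptions, a three-month run on 25,000 GPUs already lands above $100 million in compute alone.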

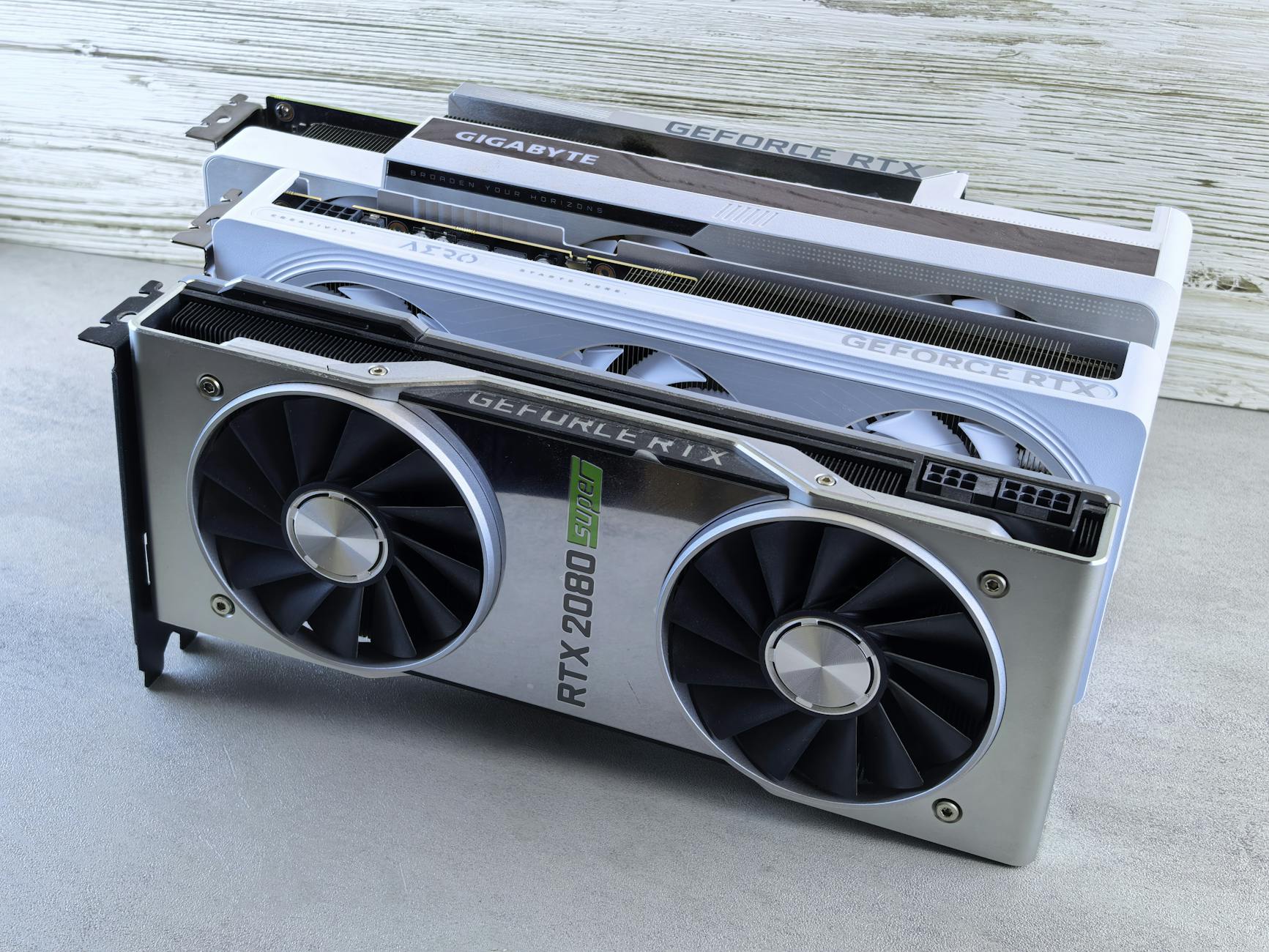

GPU Infrastructure as Competitive Moat

The 50,000 H100 GPU cluster announcement deserves particular scrutiny. Infrastructure at this scale isn’t merely about training larger models—it’s about iteration speed, experimental capacity, and the ability to run multiple research directions simultaneously. While competitors may match model performance on benchmarks, the velocity of improvement becomes the differentiator.

This infrastructure advantage compounds over time. Teams with more compute can test more hypotheses, explore larger architectural search spaces, and respond faster to emerging capabilities or safety concerns. The valuation premium Anthropic commands reflects not just its current technology position but the sustainable competitive advantage this computational capacity provides.

Enterprise technology leaders should note the strategic implications: the companies building these clusters are simultaneously potential vendors and competitors. The same infrastructure that powers Claude could eventually power vertical-specific models that compete directly with enterprise AI initiatives.

Market Consolidation Accelerates

The funding environment tells a clear story. While thousands of AI startups chase venture capital, the frontier model space is consolidating around a handful of players who can credibly commit to multi-billion-dollar infrastructure investments. OpenAI, Google DeepMind, Anthropic, and a select few others operate in a different capital regime than the broader AI ecosystem.

This consolidation pattern affects founders across the stack. Building on foundation models from well-capitalized providers becomes the pragmatic path, but it introduces dependency risks and margin compression. The companies controlling frontier models effectively set the terms for an entire layer of the technology stack.

For investors, this dynamic argues for a barbell strategy: back either the capital-intensive foundation model players or application-layer companies with defensible data moats and distribution advantages. The middle ground, companies trying to compete on model quality without comparable resources, faces increasingly difficult unit economics.

Enterprise AI Pricing Under Pressure

The relationship between investment scale and enterprise pricing remains complex. Anthropic’s massive capital raise might suggest higher prices to justify returns, but competitive dynamics and economies of scale could push in the opposite direction.

Large-scale GPU infrastructure enables more efficient inference through better optimization, custom silicon integration, and amortization across a broader customer base. As foundation model providers scale, fixed infrastructure costs are spread over more traffic and optimization lowers the cost of each additional call, so the average cost of serving an API request falls. This creates room for aggressive pricing to capture market share, a pattern familiar from cloud computing’s evolution.
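The amortization effect can be sketched with a simple average-cost model. Assuming a hypothetical fixed infrastructure cost and a constant marginal cost per million tokens (illustrative numbers, not any provider's actual costs), average cost falls steeply with volume even before optimization reduces the marginal cost itself:

```python
def avg_cost_per_million_tokens(fixed_cost_usd: float,
                                tokens_served: float,
                                marginal_usd_per_million: float) -> float:
    """Fixed infrastructure cost spread over volume, plus a constant marginal serving cost."""
    millions_served = tokens_served / 1e6
    return fixed_cost_usd / millions_served + marginal_usd_per_million

# Hypothetical: $100M of fixed cost for the period, $0.50 marginal cost per million tokens.
FIXED_COST_USD = 100e6
MARGINAL_USD_PER_MILLION = 0.50

for tokens in (1e12, 1e13, 1e14):
    cost = avg_cost_per_million_tokens(FIXED_COST_USD, tokens, MARGINAL_USD_PER_MILLION)
    print(f"{tokens:.0e} tokens -> ${cost:,.2f} per million tokens")
```

Each tenfold increase in traffic cuts the fixed-cost share by ten, which is the headroom a well-capitalized provider can spend on lower prices.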

However, the capital intensity also establishes a floor. Foundation model providers need revenue trajectories that justify their valuations and fund continued infrastructure expansion. Enterprise customers should expect pricing strategies that segment markets: competitive rates for high-volume, standardized use cases, but premium pricing for specialized deployments, fine-tuning, or guaranteed capacity.

The strategic question for enterprise technology leaders: build internal capabilities or commit to external providers? The answer increasingly depends on whether AI represents a core competitive differentiator or operational tooling. The capital requirements for frontier capabilities make in-house development viable only for the largest enterprises with AI-native business models.
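A common first pass at build versus buy is a break-even volume: the monthly usage at which a fixed in-house cost is recovered by a lower per-token serving cost. A minimal sketch, with all inputs hypothetical:

```python
import math

def breakeven_monthly_tokens(inhouse_fixed_usd_per_month: float,
                             inhouse_marginal_usd_per_million: float,
                             api_usd_per_million: float) -> float:
    """Monthly token volume at which self-hosting costs the same as paying an API per token."""
    savings_per_million = api_usd_per_million - inhouse_marginal_usd_per_million
    if savings_per_million <= 0:
        # If serving in-house is not cheaper per token, no volume recovers the fixed cost.
        return math.inf
    return inhouse_fixed_usd_per_month / savings_per_million * 1e6

# Hypothetical inputs: $500K/month for staff and infrastructure,
# $1.00 (in-house) vs $6.00 (API) per million tokens.
tokens = breakeven_monthly_tokens(500_000, 1.00, 6.00)
print(f"Break-even at about {tokens:.1e} tokens per month")
```

This model deliberately leaves out training cost, hiring, and capability gaps. At the frontier, those terms, not per-token serving cost, are what keep in-house development out of reach for most enterprises.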

The Compute Power Calculus

Behind every AI valuation lies a compute power assumption. Anthropic’s $40 billion valuation implicitly prices in expectations about model capabilities, market adoption, and competitive positioning—all of which ultimately derive from computational resources and the talent to deploy them effectively.

The 50,000 H100 GPU cluster represents roughly 50 exaflops of peak dense BF16 compute, or about 100 exaflops at FP8, based on NVIDIA’s published H100 SXM specifications. This scale enables training runs that were impossible 18 months ago and could support inference for millions of enterprise users simultaneously. The infrastructure itself becomes a form of intellectual property: optimized software stacks, custom networking configurations, and operational expertise that can’t be easily replicated.
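Exaflop figures for a cluster are per-GPU peak throughput multiplied by GPU count, and the result swings by more than an order of magnitude depending on numeric precision. The sketch below uses NVIDIA's published per-GPU peaks for the H100 SXM (dense tensor-core figures, without structured sparsity) and assumes every GPU in the cluster is the SXM variant; real workloads sustain only a fraction of peak.

```python
# Per-GPU peak throughput in TFLOPS for the H100 SXM, per NVIDIA's datasheet.
# Tensor-core entries are dense values (half of the "with sparsity" headline numbers).
H100_SXM_PEAK_TFLOPS = {
    "FP64 (vector)": 34,
    "FP64 Tensor Core": 67,
    "BF16 Tensor Core (dense)": 989,
    "FP8 Tensor Core (dense)": 1_979,
}

def cluster_peak_exaflops(gpu_count: int, tflops_per_gpu: float) -> float:
    """Theoretical peak for the whole cluster; 1 exaFLOPS = 1e6 TFLOPS."""
    return gpu_count * tflops_per_gpu / 1e6

for precision, tflops in H100_SXM_PEAK_TFLOPS.items():
    print(f"{precision:<26} {cluster_peak_exaflops(50_000, tflops):6.1f} EFLOPS")
```

The AI-relevant figures are the BF16 and FP8 rows; a number in the low single-digit exaflops only appears when FP64 throughput is counted.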

For the AI market broadly, this establishes new baseline expectations. Competitive foundation models will require comparable infrastructure investments, raising the stakes for any company attempting to challenge the current leaders. The result: further consolidation and increased pressure on companies without clear paths to this scale.

Strategic Implications for Market Participants

Investors should recognize that AI infrastructure spending has become a leading indicator of competitive position. Companies making billion-dollar GPU commitments are signaling long-term market intentions and creating barriers to entry that will shape industry structure for years.

Founders must navigate this landscape with clear-eyed realism about where defensibility exists. Application-layer innovation remains viable, but requires focus on proprietary data, distribution advantages, or vertical-specific expertise that foundation model providers can’t easily replicate.

Enterprise technology leaders face a narrowing window for strategic decisions. As foundation model providers scale and optimize, the cost-benefit analysis of internal AI development shifts. The question isn’t whether to use external AI services, but which providers to partner with and how to maintain strategic flexibility as the market consolidates.

The Anthropic funding round crystallizes a fundamental truth: frontier AI has become an infrastructure business with infrastructure economics. The winners will be those who recognize this reality and position accordingly—whether as builders, investors, or strategic customers. The $4.5 billion isn’t just capital for Anthropic; it’s a signal about what competing in AI actually costs, and who can afford to pay.